Last Updated: March 14, 2026

A multimeter is one of the most important tools for anyone working with electricity or electronics. Whether you are a beginner or a seasoned technician, understanding multimeter accuracy is essential. This helps you get reliable measurements and avoid costly mistakes. Yet, many users misunderstand what accuracy really means, how it affects results, and why it matters. Let’s explore what multimeter accuracy is, how it is defined, and what you need to know to choose and use a multimeter with confidence.

What Does Multimeter Accuracy Mean?

When you see a specification like “±0.5% + 2 digits” on your multimeter, it is talking about accuracy. In simple words, accuracy tells you how close your measurement is to the true value. No measurement tool is perfect. Every multimeter has a small error. This error is what accuracy describes.

For example, if a multimeter rated at ±1% reads 100V, the true voltage could be anywhere between 99V and 101V (ignoring the digit term explained below). The smaller the percentage, the better the accuracy. But numbers can be confusing, so it helps to break down how manufacturers define accuracy.

Understanding The Accuracy Formula

Most digital multimeters (DMMs) show accuracy in two parts:

- Percentage of reading (e.g., ±0.8%)

- Number of digits (e.g., ±2 digits)

Percentage of reading means the error scales with the value being measured. Digits (sometimes called counts) means a number of steps of the least significant digit on the range you are using. Add both terms to get the total possible error.

For example, if your multimeter says “±1.0% + 2 digits” and you measure 200V, your possible error is:

- 1.0% of 200V = 2V

- Add 2 digits (if the display shows 0.1V steps, that’s 0.2V)

So, your true voltage could be 200V ±2.2V, that is, anywhere from 197.8V to 202.2V.
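
The arithmetic above can be sketched as a small Python helper. The function name `max_error` and its parameters are illustrative, not taken from any meter's documentation; it simply adds the percentage-of-reading term to the digit term, assuming you know the resolution of the range you are on.

```python
def max_error(reading, pct, digits, resolution):
    """Worst-case error for a "±pct% + digits" accuracy spec.

    reading:    displayed value, e.g. in volts
    pct:        percentage-of-reading term, e.g. 1.0 for ±1.0%
    digits:     number of counts of the least significant digit
    resolution: value of one count on the range in use, e.g. 0.1 V
    """
    return abs(reading) * pct / 100 + digits * resolution


reading = 200.0
err = max_error(reading, pct=1.0, digits=2, resolution=0.1)
print(f"±{err:.1f} V, so {reading - err:.1f} V to {reading + err:.1f} V")
```

Running it prints ±2.2 V, so 197.8 V to 202.2 V, matching the hand calculation.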

Why Multimeter Accuracy Matters

Accuracy is not just a technical detail; it affects your work and safety. Measuring a car battery, checking mains voltage, and troubleshooting a circuit board all need trustworthy results.

- Electrical safety: Poor accuracy can miss dangerous faults.

- Component testing: Small errors can lead to wrong diagnoses.

- Calibration: For professional work, you may need proof your multimeter is accurate.

Choosing a more accurate multimeter costs more but reduces uncertainty. Beginners often overlook this, but in critical work, accuracy is essential.

Types Of Multimeter Accuracy

There are several types of accuracy to consider. Not all are obvious from the datasheet.

Basic Accuracy

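Basic accuracy is the error under reference conditions: room temperature, a good battery, and no interference. The headline figure on the box or the front page of the datasheet is usually the basic accuracy, often for the meter's best DC voltage range. For example, “±0.5% + 1 digit” is common for general-purpose models.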

Range Accuracy

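Multimeters measure many things: voltage, current, resistance, and more. Each range (for example, 200V vs. 600V) has its own accuracy specification. Even when the percentage is the same, one digit is worth less on a lower range, so the lowest range that fits your reading usually gives the smallest total error. Always check the accuracy for the range you use.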
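
To see why range choice matters, the sketch below applies one hypothetical spec, ±0.8% + 2 digits, to a 1.5V reading on a 2V range (0.001V steps) and on a 20V range (0.01V steps). Real meters may quote different percentages for each range, so treat the numbers as an illustration rather than any particular meter's behavior.

```python
def max_error(reading, pct, digits, resolution):
    # ±(pct % of reading) + (digits × one count on the chosen range)
    return abs(reading) * pct / 100 + digits * resolution


reading = 1.5  # volts
# Hypothetical meter: the same "±0.8% + 2 digits" spec on both ranges
ranges = {"2V range": 0.001, "20V range": 0.01}

for name, res in ranges.items():
    err = max_error(reading, 0.8, 2, res)
    print(f"{name}: ±{err:.3f} V ({100 * err / reading:.2f}% of reading)")
```

The percentage term is the same on both ranges, but the digit term grows from 0.002V to 0.02V, so the total error rises from ±0.014V to ±0.032V.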

Function Accuracy

Each function, such as AC voltage, DC current, or resistance, has its own accuracy. Measuring DC voltage is often more accurate than measuring AC voltage or current. A beginner may assume all readings are equally accurate, but this is rarely true.

Temperature And Environmental Effects

Most datasheets show accuracy at 23°C ±5°C (room temperature). If your multimeter gets hot or cold, accuracy drops. High humidity or strong magnetic fields can also cause errors. If you work outside or in an industrial area, consider this extra uncertainty.
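
Some datasheets express the extra error outside the reference band as a temperature coefficient. The sketch below assumes a form like “0.1 × specified accuracy per °C outside 18°C to 28°C”; the band, the 0.1 factor, and the function name `temp_adjusted_error` are placeholder assumptions, so substitute the figures from your own meter's datasheet.

```python
def temp_adjusted_error(base_error, ambient_c, ref_low=18.0, ref_high=28.0,
                        coeff=0.1):
    """Scale a room-temperature error for operation outside the reference band.

    Assumes a hypothetical datasheet rule: add coeff × (specified accuracy)
    for every °C the ambient temperature lies outside ref_low..ref_high.
    """
    if ambient_c < ref_low:
        delta = ref_low - ambient_c
    elif ambient_c > ref_high:
        delta = ambient_c - ref_high
    else:
        delta = 0.0
    return base_error * (1 + coeff * delta)


base = 0.014  # ±V, a worst-case error computed at room temperature
print(temp_adjusted_error(base, 23))  # inside the band: unchanged
print(temp_adjusted_error(base, 5))   # 13 °C below the band
```

Under these assumptions, working at 5°C more than doubles the error (a factor of 2.3), which is why outdoor readings deserve extra margin.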

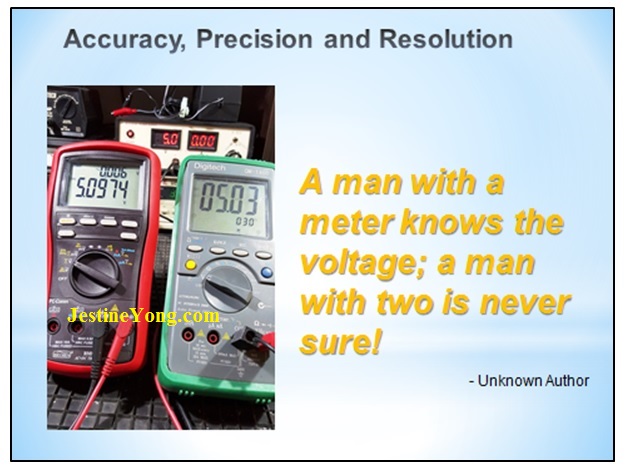

Resolution Vs. Accuracy

Many people confuse resolution (how small a change you can see on the display) with accuracy (how close you are to the real value).

For example, a 3½ digit multimeter on its 20V range may show 0.01V steps. That is high resolution. But if its accuracy is ±1%, the last digit or two may not be correct. High resolution does not guarantee high accuracy.

Non-obvious insight: Some multimeters show more digits than their accuracy supports. Always focus on accuracy, not just the number of digits.
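
One way to see the gap between resolution and accuracy is to express the worst-case error in display counts. This sketch assumes a 3½ digit meter on its 20V range (0.01V steps) with a ±1% + 2 digits spec; the function name `uncertain_counts` is illustrative.

```python
def uncertain_counts(reading, pct, digits, resolution):
    """Number of display counts spanned by the worst-case error."""
    error = abs(reading) * pct / 100 + digits * resolution
    return error / resolution


# Assumed: 20 V range, 0.01 V per count, ±1% + 2 digits
counts = uncertain_counts(12.00, pct=1.0, digits=2, resolution=0.01)
print(f"Error spans about {counts:.0f} counts of the last digit")
```

For a 12.00V reading the error spans about 14 counts (±0.14V), so the hundredths digit carries no reliable information and even the tenths digit can be off by one.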


Examples Of Multimeter Accuracy In Practice

Let’s see how accuracy affects real measurements.

Example 1: Measuring A 1.5V Battery

Suppose your multimeter has “±0.8% + 2 digits” accuracy on the 2V DC range, and the display resolution is 0.001V.

- 0.8% of 1.500V = 0.012V

- 2 digits = 0.002V

- Total possible error: 0.014V

So, if the meter reads 1.500V, the true voltage lies between 1.486V and 1.514V.

Example 2: Checking A 230V AC Outlet

If your meter’s AC voltage accuracy is “±1.2% + 3 digits” on the 600V range, and resolution is 0.1V:

- 1.2% of 230V = 2.76V

- 3 digits = 0.3V

- Total error: 3.06V

So, if the meter reads 230.0V, the true voltage lies between 226.94V and 233.06V.
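
Both examples can be checked with a short script that turns a spec into lower and upper limits. `reading_bounds` is a hypothetical helper; the spec values are the ones used in Examples 1 and 2.

```python
def reading_bounds(reading, pct, digits, resolution):
    """Return (low, high) limits for the true value behind a reading."""
    error = abs(reading) * pct / 100 + digits * resolution
    return reading - error, reading + error


examples = [
    # (label, reading in V, % of reading, digits, resolution in V)
    ("1.5V battery, 2V DC range", 1.500, 0.8, 2, 0.001),
    ("230V outlet, 600V AC range", 230.0, 1.2, 3, 0.1),
]

for label, reading, pct, digits, res in examples:
    low, high = reading_bounds(reading, pct, digits, res)
    print(f"{label}: {low:.3f} V to {high:.3f} V")
```

It prints 1.486 V to 1.514 V and 226.940 V to 233.060 V, matching the hand calculations.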

Example 3: Comparing Two Multimeters

Here is a simple comparison between a cheap and a high-end multimeter:

| Model | DC Voltage Accuracy | AC Voltage Accuracy | Resolution |
|---|---|---|---|
| Budget DMM | ±1.0% + 2 digits | ±1.5% + 4 digits | 0.1V |
| Professional DMM | ±0.05% + 1 digit | ±0.5% + 2 digits | 0.01V |

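For DC voltage, the professional model's percentage term (0.05% vs. 1.0%) and digit term (1 × 0.01V vs. 2 × 0.1V) are both 20 times smaller, so its worst-case error is about 20 times lower. This matters when you need precise measurements.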
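
The comparison can be made concrete by computing each meter's worst-case DC error at the same reading. This sketch takes the specs from the table above and assumes both meters use the listed resolution on the range in question; 12V is an arbitrary example reading and `max_error` is an illustrative helper.

```python
def max_error(reading, pct, digits, resolution):
    # ±(pct % of reading) + (digits × one count)
    return abs(reading) * pct / 100 + digits * resolution


# DC voltage specs: (% of reading, digits, resolution in V)
meters = {
    "Budget DMM": (1.0, 2, 0.1),
    "Professional DMM": (0.05, 1, 0.01),
}

reading = 12.0  # volts, example reading
for name, (pct, digits, res) in meters.items():
    print(f"{name}: ±{max_error(reading, pct, digits, res):.3f} V")
```

It prints ±0.320 V for the budget meter and ±0.016 V for the professional one. Because both terms of the spec shrink by the same factor of 20 here, the ratio stays at 20 for any reading.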

Factors That Affect Multimeter Accuracy

Several factors can cause your readings to be less accurate than the datasheet says.

1. Battery Condition

A weak battery can cause errors or unstable readings. Always check your battery indicator.

2. Test Leads

Cheap or damaged leads add resistance and noise. Use good quality leads and check connections.

3. Calibration

All multimeters drift over time. Professional meters need annual calibration to stay accurate. Hobby meters may not offer calibration, but you should check performance with a known reference.

4. User Technique

Touching the probe tips with your fingers, poor contact points, or measuring in an electrically noisy area can add errors. Hold the probes by their insulated grips and press the tips firmly onto clean contact points.

5. Temperature And Humidity

As discussed earlier, extreme conditions reduce accuracy. If you need precise results, measure in a stable, normal environment.


Interpreting Multimeter Accuracy Specifications

Datasheets often use technical language. Here’s how to read the most common specs:

- ±0.5% + 2 digits: The ±0.5% is a percentage of the measured value. The “+2 digits” means two steps of the least significant digit on the range in use.

- Operating temperature: The temperature range in which the stated accuracy applies. Outside it, expect extra error.

- Calibration period: How often the meter needs recalibration to maintain accuracy.

Understanding these terms helps you pick the right tool and trust your measurements.
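
If you keep specs for several meters in a notes file, a small parser can pull out the two terms. This is a sketch assuming specs are written in the “±0.5% + 2 digits” form used in this article (it also accepts “digit” and “counts”); other layouts, such as “±(0.5% + 2)”, would need a different pattern. `parse_spec` is a hypothetical name.

```python
import re

# Matches e.g. "±0.5% + 2 digits", "0.05% + 1 digit", "±1.2% + 3 counts"
SPEC_PATTERN = re.compile(
    r"±?\s*(?P<pct>\d+(?:\.\d+)?)\s*%\s*\+\s*(?P<digits>\d+)\s*(?:digits?|counts?)",
    re.IGNORECASE,
)


def parse_spec(text):
    """Split a "±0.5% + 2 digits" style spec into (percent, digits)."""
    match = SPEC_PATTERN.search(text)
    if match is None:
        raise ValueError(f"unrecognised accuracy spec: {text!r}")
    return float(match.group("pct")), int(match.group("digits"))


pct, digits = parse_spec("±0.5% + 2 digits")
print(pct, digits)
```

The two numbers it returns are exactly the inputs needed for the percentage-plus-digits error calculation.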

Accuracy Classes And Their Uses

Different jobs need different accuracy. Here are typical classes:

| Accuracy Class | Typical Usage | Example Accuracy |
|---|---|---|
| Basic | Home, hobby | ±1% to ±2% |
| Professional | Industrial, lab | ±0.05% to ±0.5% |
| Reference Standard | Calibration labs | ±0.01% or better |

Note that CAT ratings (CAT I to CAT IV) describe a meter's overvoltage safety category, not its accuracy, so check them separately.

Non-obvious insight: Buying the most accurate meter is not always best. For basic home use, ±1% is enough. For electronics design, ±0.1% or better is worth the investment.

Common Myths And Mistakes About Multimeter Accuracy

Many beginners believe some common myths:

- “If my meter shows more digits, it is more accurate.” (Wrong: digits are resolution, not accuracy.)

- “Accuracy is always the same, no matter what I measure.” (Wrong: accuracy changes by function and range.)

- “A new meter is always accurate.” (Wrong: meters can be out of calibration from the start.)

To avoid these mistakes, always check the datasheet, understand the specs, and test your meter against a reference if possible.

How To Improve Measurement Accuracy

You can get better results by following a few smart tips:

- Use the right range: Always pick the lowest range that covers your value without overloading the meter. This gives the best accuracy.

- Keep batteries fresh: A low battery can cause unstable or wrong readings.

- Check your test leads: Replace old or damaged leads.

- Avoid touching the probe tips: When measuring resistance, your body's resistance ends up in parallel with the component and skews the reading.

- Calibrate regularly: Especially for professional use.

- Take multiple readings: If possible, measure several times and average.

- Work in stable conditions: Avoid measuring in very hot, cold, or humid places.

- Read the display carefully: Know what each digit means for your range.
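
Averaging several readings can be sketched with Python's standard `statistics` module. The readings below are made-up values for illustration. Note the limitation: averaging reduces random scatter, shown here by the standard error of the mean, but it cannot remove a systematic error such as a calibration offset, which still falls under the meter's accuracy spec.

```python
from statistics import mean, stdev

# Hypothetical repeated readings of the same 5 V rail, in volts
readings = [5.02, 5.01, 5.03, 5.02, 5.00, 5.02]

avg = mean(readings)
# Standard error of the mean: random scatter shrinks roughly as 1/sqrt(n)
sem = stdev(readings) / len(readings) ** 0.5
print(f"mean = {avg:.3f} V, standard error = {sem:.4f} V")
```

The standard error comes out well under 0.01V, but the true voltage is still only known to within the meter's specified accuracy.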


When Is High Accuracy Necessary?

Not every task needs a highly accurate multimeter. Here are some cases when it does matter:

- Calibrating equipment: Even small errors matter.

- Testing precision electronics: For example, measuring reference voltages in microcontrollers.

- Scientific experiments: Data must be accurate and trustworthy.

But for checking if a battery is charged, or if a wire is live, high accuracy is less important.

Choosing A Multimeter Based On Accuracy

When buying a multimeter, accuracy should match your needs and budget. Here are some steps:

- Define your usage: Home, hobby, professional, or lab.

- Check accuracy specs: Look for DC voltage, AC voltage, current, resistance.

- Balance features and price: More accuracy costs more.

- Read reviews: See what other users say about real-world performance.

- Look for calibration options: For professional work, this is essential.
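
One way to match accuracy to a job is to compare the meter's worst-case error with the tolerance you need to check. The sketch below uses the common 4:1 rule of thumb (meter error no more than a quarter of the tolerance) as an assumption, not a requirement; `good_enough` and the 3.3V ±2% example are hypothetical.

```python
def max_error(reading, pct, digits, resolution):
    # ±(pct % of reading) + (digits × one count)
    return abs(reading) * pct / 100 + digits * resolution


def good_enough(nominal, tolerance_pct, pct, digits, resolution, ratio=4.0):
    """True if the meter's worst-case error is at most 1/ratio of the tolerance.

    ratio=4 follows a common 4:1 rule of thumb; adjust it to your own needs.
    """
    tolerance = nominal * tolerance_pct / 100
    return max_error(nominal, pct, digits, resolution) * ratio <= tolerance


# Checking a 3.3 V rail that must stay within ±2% (±0.066 V), 0.001 V steps
print(good_enough(3.3, 2.0, pct=1.0, digits=2, resolution=0.001))
print(good_enough(3.3, 2.0, pct=0.05, digits=1, resolution=0.001))
```

With a ±1% + 2 digits meter the check fails (about ±0.035V of error against a ±0.066V tolerance), while a ±0.05% + 1 digit meter passes easily.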

For a deeper dive on multimeter specifications, you can explore the Multimeter Wikipedia page.

Frequently Asked Questions

What Does “±1.0% + 2 Digits” Mean On My Multimeter?

It means your measurement can be off by 1% of the reading, plus 2 counts of the smallest digit shown on the display. So, if the meter reads 100.0V on a range with 0.1V resolution, the true value could be between 98.8V and 101.2V.

Is A 3½ Digit Multimeter Accurate Enough For Electronics Work?

For basic electronics, a 3½ digit multimeter (which displays up to 1999) is usually fine. But for sensitive circuits or calibration tasks, a 4½ digit or higher with better accuracy is recommended.
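
The link between digit count and resolution is simple division. This sketch assumes ideal 2000-count (3½ digit) and 20000-count (4½ digit) displays on 2V, 20V, and 200V ranges; real meters may use other counts and ranges, and `resolution` is an illustrative helper name.

```python
def resolution(full_scale, counts):
    """Smallest display step on a range, e.g. 2 V / 2000 counts = 0.001 V."""
    return full_scale / counts


# 3½ digit ≈ 2000 counts (displays up to 1999); 4½ digit ≈ 20000 counts
for label, counts in [("3½ digit", 2000), ("4½ digit", 20000)]:
    for full_scale in (2, 20, 200):
        step = resolution(full_scale, counts)
        print(f"{label}, {full_scale} V range: {step:g} V steps")
```

On every range the 4½ digit meter resolves ten times finer steps, though, as noted earlier, finer resolution only helps if its accuracy spec is correspondingly tighter.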

How Often Should I Calibrate My Multimeter?

Professional or industrial meters should be calibrated every year. For home or hobby use, check accuracy with a known source every 1-2 years, or if you notice odd readings.

Does Temperature Affect My Multimeter’s Accuracy?

Yes, most meters are designed for room temperature (about 23°C). If you use the meter in extreme heat or cold, the accuracy drops. Check your meter’s datasheet for the operating range.

Can I Trust The Cheapest Multimeters?

Cheap meters can work for basic checks, but their accuracy and safety may not be reliable. For anything important, such as industrial, automotive, or electronics repair work, invest in a known brand with clear accuracy specs.

Multimeter accuracy is the foundation of trustworthy measurement. By understanding what the numbers mean, recognizing the limits of your tool, and using good technique, you can work smarter and safer, whether at home, in the lab, or in the field.